Key Takeaways

- Helidon and Spring Boot services can work as peers when they share platform contracts instead of framework internals.

- CloudBank V5 demonstrates those contracts through Eureka discovery, JWT/OIDC security, APISIX routing, and OpenTelemetry-based telemetry.

- The Helidon customer service and Spring Boot account service each stay idiomatic while participating in the same operational system.

- A live CloudBank V5 deployment shows the mixed services registered in Eureka and visible in SigNoz telemetry.

I have been working with a microservices application that includes both Helidon and Spring Boot services. That mix is not unusual. Real systems accumulate useful services over time. Different teams choose different frameworks for good reasons. Some services benefit from Spring Boot conventions, Spring Security, and the Spring ecosystem. Others are a good fit for Helidon, MicroProfile APIs, and a smaller service runtime.

The interesting question is not which framework should win. In an application that already has both, the practical question is how the services collaborate.

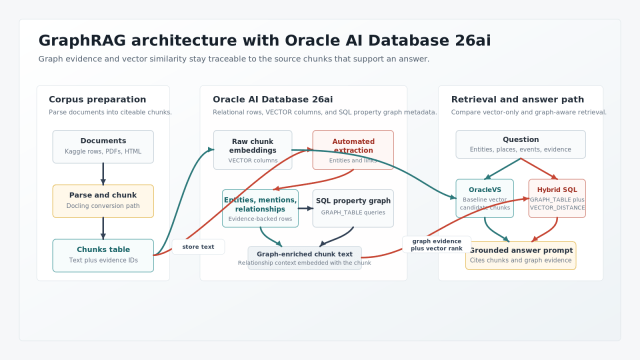

This article looks at that collaboration pattern through CloudBank V5, an example banking application that includes a Helidon customer service and Spring Boot services such as account. CloudBank and OBaaS give us a concrete deployment and operations environment, but they are not the point of the article. The point is interoperability: how Helidon and Spring Boot services can behave like one system when they agree on the right contracts.

In the CloudBank example, the shared contracts are:

- service discovery through Eureka

- authentication and authorization through Spring Authorization Server, OAuth2, OpenID Connect metadata, and JWTs

- external API routing and route-level scope checks through APISIX

- common telemetry through OpenTelemetry auto-instrumentation and SigNoz

Each service remains idiomatic inside its own framework. The integration happens at the system boundaries.

The Collaboration Model

Mixed-framework systems work best when the shared surface is small and explicit. The goal is not to make a Helidon service look like a Spring Boot service, or the other way around. The goal is to make both services understandable to the platform and to each other.

In CloudBank, that means a request can enter through APISIX, carry a JWT issued by the authorization server, resolve service targets through Eureka, reach either the Helidon customer service or the Spring Boot account service, and emit telemetry into the same observability pipeline.

That is the architecture in one picture. The gateway does not need to know the backend programming model. The registry does not need every service to use the same application framework. The authorization server publishes a token contract. The observability pipeline consumes telemetry in a common format.

The services collaborate because they share platform contracts, not because they share framework internals.

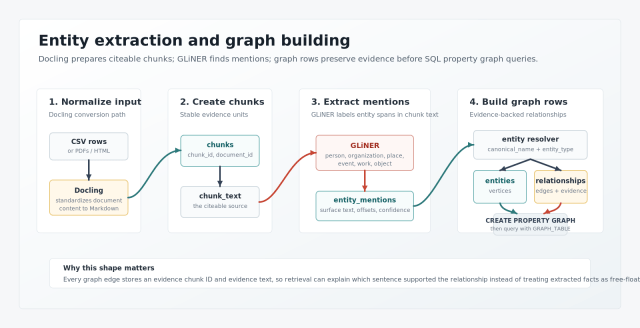

Discovery: One Registry for Different Runtimes

Service discovery is the first integration plane. A mixed-framework system needs a way to identify services without binding callers to pod addresses, Kubernetes internals, or framework-specific clients.

CloudBank uses Eureka as the service registry. The Spring Boot account service reaches Eureka through normal Spring configuration. Its application configuration gives the service a stable Spring application name and imports shared settings:

    spring:
      application:
        name: account
      config:
        import: classpath:common.yaml

The shared Spring configuration supplies the Eureka client behavior:

    eureka:
      instance:
        hostname: ${spring.application.name}
        preferIpAddress: true
      client:
        service-url:
          defaultZone: ${eureka.service-url}
        fetch-registry: true
        register-with-eureka: true
        enabled: true

The Helidon customer service reaches the same registry through its deployment configuration. Its Helm values identify the service as a Helidon workload and enable Eureka registration:

    fullnameOverride: "customer-helidon"
    obaas:
      releaseName: "obaas"
      framework: "HELIDON"
    eureka:
      enabled: true
      instance:
        appname: "helidon-customer-service"

This is a good example of healthy interoperability. The two services do not need the same configuration syntax. They need the same operational outcome: each service has a stable identity and registers with the same discovery system.

That shared registry matters at the edge. CloudBank’s APISIX routes use Eureka discovery for upstreams. A route can target the ACCOUNT or CUSTOMER service identity without caring whether the implementation behind that identity is Spring Boot or Helidon.

The design rule is simple: let each framework configure discovery in its natural way, but make the service identity stable and visible outside the process.
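The rule can be illustrated with a small in-memory model. This is only a sketch, not the Eureka client API: `RegistrySketch`, its methods, and the addresses in the usage note are hypothetical. The upper-casing mirrors how the registry lists ACCOUNT for a service configured as `account`.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Locale;
import java.util.Map;

// Hypothetical in-memory model of a shared registry; not the Eureka client API.
final class RegistrySketch {
    // Service identity -> addresses of the instances registered under it.
    private final Map<String, List<String>> byIdentity = new HashMap<>();

    // Normalize the identity so "account" and "ACCOUNT" refer to the same service.
    private static String key(String appName) {
        return appName.toUpperCase(Locale.ROOT);
    }

    // Registration records an identity and an address; the framework is never part of it.
    void register(String appName, String address) {
        byIdentity.computeIfAbsent(key(appName), k -> new ArrayList<>()).add(address);
    }

    // Callers resolve by identity alone and get an immutable snapshot of addresses.
    List<String> resolve(String appName) {
        return List.copyOf(byIdentity.getOrDefault(key(appName), List.of()));
    }
}
```

A Spring Boot service could call `register("account", "10.0.0.5:8080")` and a Helidon service `register("customer-helidon", "10.0.0.6:8080")`; a caller that resolves `ACCOUNT` or `CUSTOMER-HELIDON` cannot tell which framework answered, which is the point.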

In the live CloudBank deployment used for this article, Eureka shows Spring Boot ACCOUNT and Helidon CUSTOMER-HELIDON registered in the same service registry:

Security: Use the JWT as the Shared Trust Object

Security is the integration plane where framework differences can become painful if the architecture is not careful. Spring Security and MicroProfile JWT do not expose the same programming model. That is fine. They do not need to.

The shared object is the JWT.

CloudBank uses azn-server, a Spring Authorization Server service, to issue OAuth2 access tokens. APISIX exposes public authorization metadata and key endpoints:

    /.well-known/* -> AZN-SERVER
    /oauth2/* -> AZN-SERVER

Protected API routes require scopes. The route script creates read, write, admin, test, and transfer routes for the CloudBank services. For the customer and account thread, the relevant pattern is:

    /api/v1/account* -> ACCOUNT requires cloudbank.read
    /api/v1/customer* -> CUSTOMER requires cloudbank.read

APISIX uses the OpenID Connect plugin in bearer-only mode. The route configuration points to authorization server discovery metadata and preserves the bearer token for the backend service:

    {
      "openid-connect": {
        "discovery": "http://azn-server.<namespace>.svc.cluster.local:8080/.well-known/openid-configuration",
        "required_scopes": ["cloudbank.read"],
        "bearer_only": true,
        "unauth_action": "deny",
        "access_token_in_authorization_header": true
      }
    }

The gateway check is important, but it is only the first layer. The backend service still validates the token and enforces resource-specific rules. That is what keeps authorization close to the data.

The Helidon customer service uses MicroProfile JWT. Its configuration points JWT verification at the same JWKS endpoint that the rest of the system uses:

    mp.jwt.verify.publickey.location=${CLOUDBANK_SECURITY_JWK_SET_URI:http://azn-server:8080/oauth2/jwks}

Inside the service, the resource can work with the authenticated principal and token claims using Helidon and MicroProfile APIs. A compact version of the scope-checking pattern looks like this:

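    @Inject
    JsonWebToken jwt;

    private boolean hasScope(String expected) {
        // The "scope" claim is read as a space-delimited string; a missing token or claim means no scopes.
        String scopes = jwt == null ? null : jwt.getClaim("scope");
        return scopes != null && Arrays.asList(scopes.split(" ")).contains(expected);
    }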
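One detail worth handling defensively is the shape of the scope claim. OAuth2 conventionally represents scopes as a space-delimited string, but some issuers serialize the claim as a JSON array instead. The following is a minimal sketch, assuming the claim has already been converted to a plain Java `String` or `Collection`; `ScopeClaims` is a hypothetical helper, not part of MicroProfile JWT or Spring Security, and converting from a framework-specific claim type is left out.

```java
import java.util.Collection;
import java.util.LinkedHashSet;
import java.util.Set;

// Hypothetical helper; the class and method names are illustrative only.
final class ScopeClaims {
    private ScopeClaims() {}

    // Normalize a raw "scope" claim value into a set of scope names.
    static Set<String> parse(Object claim) {
        Set<String> scopes = new LinkedHashSet<>();
        if (claim instanceof String) {
            // Space-delimited form, e.g. "cloudbank.read cloudbank.write".
            for (String s : ((String) claim).trim().split("\\s+")) {
                if (!s.isEmpty()) {
                    scopes.add(s);
                }
            }
        } else if (claim instanceof Collection) {
            // Array form: keep the string value of each non-null element.
            for (Object o : (Collection<?>) claim) {
                if (o != null) {
                    scopes.add(String.valueOf(o));
                }
            }
        }
        return scopes; // empty when the claim is missing or has an unexpected type
    }

    // True when the normalized scope set contains the expected scope.
    static boolean hasScope(Object claim, String expected) {
        return parse(claim).contains(expected);
    }
}
```

Normalizing the claim once keeps the scope vocabulary identical on both sides, even if the two runtimes hand the claim to application code in different types.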

The Spring Boot account service validates the same kind of token through Spring Security. CloudBank’s shared Spring configuration points the resource server at the same JWKS location:

    spring:
      security:
        oauth2:
          resourceserver:
            jwt:
              jwk-set-uri: ${CLOUDBANK_SECURITY_JWK_SET_URI:http://azn-server:8080/oauth2/jwks}

Once Spring Security has authenticated the request, the account controller can make resource decisions with the Authentication object:

    @GetMapping("/accounts")
    public List<Account> getAllAccounts(Authentication authentication) {
        if (!isPrivileged(authentication)) {
            if (authentication == null || authentication.getName() == null) {
                return List.of();
            }
            return accountRepository.findByAccountCustomerId(authentication.getName());
        }
        return accountRepository.findAll();
    }

The frameworks are different, but the contract is the same:

- the issuer is the authorization server

- the verification keys are published through JWKS

- the token carries the principal and scopes

- APISIX checks broad route-level authorization

- each service checks resource-specific authorization

That is a much cleaner integration point than trying to share framework-specific security code across runtimes.
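The two authorization layers can be modeled in a few lines of plain Java. This is a simplified sketch rather than CloudBank code: `TwoLayerAuthz` and its methods are hypothetical, the service layer is reduced to an allow or deny decision, and treating `cloudbank.admin` as a privileged scope is an assumption made for illustration.

```java
import java.util.Set;

// Hypothetical model of the two authorization layers; all names are illustrative.
final class TwoLayerAuthz {
    private TwoLayerAuthz() {}

    // Layer 1 (gateway): the route only checks that the token carries the required scope.
    static boolean gatewayAllows(Set<String> tokenScopes, String requiredScope) {
        return tokenScopes.contains(requiredScope);
    }

    // Layer 2 (service): the resource check compares the token subject with the record owner.
    // Treating "cloudbank.admin" as a privileged scope is an assumption made for this sketch.
    static boolean serviceAllows(String subject, Set<String> tokenScopes, String ownerId) {
        if (subject == null) {
            return false;
        }
        return tokenScopes.contains("cloudbank.admin") || subject.equals(ownerId);
    }

    // A request succeeds only when both layers agree.
    static boolean allowed(String subject, Set<String> scopes, String requiredScope, String ownerId) {
        return gatewayAllows(scopes, requiredScope) && serviceAllows(subject, scopes, ownerId);
    }
}
```

A token with only `cloudbank.read` passes the gateway for a read route but is still refused by the service when the subject does not own the record. Neither check depends on which framework hosts the resource.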

Routing: A Consistent Edge Without Flattening the Services

APISIX gives the mixed system one external API edge. That edge can expose consistent routes and enforce coarse-grained route scopes while leaving each service free to use its own controller model.

The route script creates upstreams by service identity and discovery type:

    {
      "uri": "/api/v1/customer*",
      "upstream": {
        "service_name": "CUSTOMER",
        "type": "roundrobin",
        "discovery_type": "eureka"
      }
    }

That route is framework-neutral. APISIX needs a URI, an upstream service name, a discovery mechanism, and plugins. It does not need to know about JAX-RS annotations, Spring MVC annotations, CDI beans, or Spring components.

This gives the system a useful split of responsibility:

- APISIX controls the public surface area.

- Eureka resolves service identities.

- Spring Authorization Server defines the token contract.

- Helidon and Spring Boot services enforce the rules close to their resources.

CloudBank also shows why that split matters. The route script blocks external access to account journal endpoints by creating a deny-style route for /api/v1/account/journal*. The account service can still have internal endpoints for service-to-service work, but the gateway does not accidentally publish them as part of the public API.

This is the kind of boundary that lets teams mix frameworks without creating an accidental tangle. The outside API is deliberate. Internal implementation remains local.
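To make the deny-route idea concrete, here is a small model of an edge route table. It does not reproduce APISIX's matching engine: `RouteTableSketch` is hypothetical, and the rule that the longest matching prefix wins is an assumption chosen so that a specific deny route overrides a broader public one.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Optional;

// Hypothetical model of an edge route table; it does not reproduce APISIX's matching engine.
final class RouteTableSketch {
    static final String DENY = "DENY";

    // Each entry is {prefix, target}.
    private final List<String[]> routes = new ArrayList<>();

    // Register a prefix such as "/api/v1/account" for a pattern like "/api/v1/account*".
    RouteTableSketch route(String prefix, String target) {
        routes.add(new String[] {prefix, target});
        return this;
    }

    // Assumption for this sketch: the longest matching prefix wins, so a more
    // specific deny route overrides the broader public route.
    Optional<String> resolve(String path) {
        String[] best = null;
        for (String[] r : routes) {
            if (path.startsWith(r[0]) && (best == null || r[0].length() > best[0].length())) {
                best = r;
            }
        }
        return best == null ? Optional.empty() : Optional.of(best[1]);
    }
}
```

With routes for `/api/v1/account` (ACCOUNT), `/api/v1/account/journal` (DENY), and `/api/v1/customer` (CUSTOMER), a request for a journal path resolves to the deny target while other account paths still reach the ACCOUNT identity. Paths with no route resolve to nothing, so they are never published by accident.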

APIs: Share Behavior, Not Annotations

The customer and account services do not need common controller annotations to interoperate. They need common API behavior.

The Helidon customer service exposes its API with JAX-RS style resources:

    @Path("/api/v1/customer")
    @Authenticated
    public class CustomerResource {

        @GET
        @Produces(MediaType.APPLICATION_JSON)
        public Response getCustomers() { ... }

        @POST
        @Consumes(MediaType.APPLICATION_JSON)
        public Response createCustomer(Customer customer) { ... }
    }

The Spring Boot account service exposes its API with Spring MVC:

    @RestController
    @RequestMapping("/api/v1")
    public class AccountController {

        @GetMapping("/accounts")
        public List<Account> getAllAccounts(Authentication authentication) { ... }

        @GetMapping("/account/getAccounts/{customerId}")
        public ResponseEntity<List<Account>> getAccountsByCustomerId(
                @PathVariable String customerId,
                Authentication authentication) { ... }
    }

Those programming models are different. The interoperability question lives one level up:

- What does the authenticated principal represent?

- Which scopes allow reads, writes, admin operations, tests, and transfers?

- Which paths are public?

- Which paths are internal?

- Which service owns each business rule?

- What JSON shapes and status codes do callers see?

Once those answers are stable, framework annotations become implementation details. A Helidon service can protect customer records using MicroProfile JWT and JAX-RS. A Spring Boot service can protect account records using Spring Security and Spring MVC. Clients and gateways interact with the API contract, not the controller framework.

This is also where integration tests should focus. A good mixed-framework test does not assert that both services are built the same way. It asserts that the same token identity produces consistent business behavior at both endpoints, that the same scope vocabulary is honored, and that callers see predictable HTTP results. That kind of test protects the contract without freezing either team inside the other team’s framework choices.
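A contract-level check of this kind needs nothing framework-specific. The sketch below uses only the JDK's `java.net.http` client; `ContractProbe` is a hypothetical name, the URI and token are supplied by the caller, and the expected 401 and 200 status codes are the contract assumed here for a protected read endpoint.

```java
import java.io.IOException;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;

// Hypothetical contract probe; the class name and any URLs passed in are illustrative.
final class ContractProbe {
    private ContractProbe() {}

    // Issue a GET and return only the status code, with or without a bearer token.
    static int status(HttpClient client, URI uri, String bearerTokenOrNull)
            throws IOException, InterruptedException {
        HttpRequest.Builder request = HttpRequest.newBuilder(uri).GET();
        if (bearerTokenOrNull != null) {
            request.header("Authorization", "Bearer " + bearerTokenOrNull);
        }
        return client.send(request.build(), HttpResponse.BodyHandlers.discarding()).statusCode();
    }

    // The same expectations apply to every protected endpoint, whatever framework serves it:
    // no token is rejected with 401, and a token with the read scope is accepted with 200.
    static boolean honorsTokenContract(HttpClient client, URI uri, String readToken)
            throws IOException, InterruptedException {
        return status(client, uri, null) == 401 && status(client, uri, readToken) == 200;
    }
}
```

Running `honorsTokenContract` once against a customer URL and once against an account URL exercises the shared contract without the test knowing which service is Helidon and which is Spring Boot.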

Observability: One Operational View

Interoperability is not complete if the services are only integrated at request time. They also need to be operable together.

CloudBank uses OBaaS OpenTelemetry support as the demonstration path. The Spring Boot account values enable telemetry:

    otel:
      enabled: true

The same values file identifies account as a Spring Boot workload:

    obaas:
      framework: "SPRING_BOOT"

The Helidon customer values identify the customer service as a Helidon workload:

    obaas:
      framework: "HELIDON"

The useful idea is platform-level instrumentation. Application teams should not have to invent a different tracing pipeline for each framework. With the OBaaS Java auto-instrumentation path, the platform can inject instrumentation and send traces, metrics, and logs toward the shared observability backend.

For day-two operations, this is a major part of the integration story. When a support engineer follows a request, the framework boundary should not become an observability boundary. A request to customer and a request to account should be visible in the same telemetry system, with service names, status codes, latency, and logs available from one operational view.

The SigNoz Services dashboard shows the same operational view from the telemetry side. In this run, the services list includes account, customer, and customer-helidon, with latency, error-rate, and operations-per-second columns coming from the shared OpenTelemetry pipeline:

SigNoz also receives trace data from the CloudBank request path. The deployment did not produce a single application trace containing both Spring Boot account and Helidon customer-helidon; the services are demonstrated here as separate participants in the same trace store. The first SigNoz capture shows a representative Helidon customer trace:

The second SigNoz capture shows a representative Spring Boot account trace from the same backend:

The same run validated the security path with live HTTP calls:

    gateway_metadata=200
    account_no_token=401
    account_read_token=200
    helidon_no_token=401
    helidon_read_token=200

Those checks are intentionally contract-level checks. They do not prove that Helidon and Spring Boot use the same security implementation. They prove something more useful for interoperability: the same issuer and scope vocabulary can protect both service styles, and a scoped token can reach both the Spring Boot account API and the Helidon customer API.

Design Lessons Beyond CloudBank

CloudBank V5 is a convenient example, not a special rulebook. The same pattern applies to other systems that mix Helidon and Spring Boot.

First, give every service a stable identity. That identity should appear in discovery, route configuration, logs, traces, and dashboards. If the platform can identify the service consistently, the implementation framework can stay behind the boundary.

Second, standardize on a token contract. A shared issuer, JWKS endpoint, principal meaning, and scope vocabulary are more important than shared security code. Helidon can validate the token with MicroProfile JWT. Spring Boot can validate it with Spring Security. The architecture stays coherent because both services trust the same issuer and interpret the same claims.

Third, put public exposure at the gateway and data protection in the service. APISIX is a good place to define public routes and route-level scope requirements. It is not a substitute for service-side authorization. The backend still owns resource-level decisions.

Fourth, make telemetry a platform contract. Even when requests do not cross directly between Helidon and Spring Boot services, both frameworks should emit OpenTelemetry data into the same backend so operators can inspect each service from one place.

Finally, resist the urge to flatten the frameworks. Shared libraries can help inside a family of services, as CloudBank does with common Spring configuration for Spring Boot services. But Helidon and Spring Boot interoperability is stronger when the cross-framework contract is based on HTTP, JWTs, discovery, routing, and telemetry instead of a forced common programming model.

Closing

Helidon and Spring Boot can coexist cleanly when the architecture treats frameworks as local implementation choices and platform contracts as the shared language.

In CloudBank V5, the Helidon customer service and the Spring Boot account service collaborate through ordinary, durable boundaries: Eureka for discovery, Spring Authorization Server for JWTs and OpenID Connect metadata, APISIX for consistent routing and route scopes, and OpenTelemetry for a shared operational view.

That is the lesson I would carry into any mixed-framework system. Do not integrate frameworks by making them imitate each other. Integrate services by making the boundaries explicit.
